Hello Object Detection

A very basic introduction to using object detection models with OpenVINO™.

The horizontal-text-detection-0001 model from Open Model Zoo is used. It detects horizontal text in images and returns a blob of data in the shape of [100, 5]. Each detected text box is stored in the [x_min, y_min, x_max, y_max, conf] format, where (x_min, y_min) are the coordinates of the top-left bounding box corner, (x_max, y_max) are the coordinates of the bottom-right bounding box corner, and conf is the confidence for the predicted class.
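
To make the output layout concrete, here is a minimal sketch that builds a stand-in array with the [100, 5] shape described above and reads one row. The array contents, the name `example_boxes`, and the 0.3 threshold are illustrative assumptions, not real model output.

```python
import numpy as np

# Hypothetical stand-in for the model output: 100 rows of
# [x_min, y_min, x_max, y_max, conf]. The values are made up for illustration.
example_boxes = np.zeros((100, 5), dtype=np.float32)
example_boxes[0] = [10, 20, 110, 60, 0.95]   # a confident detection
example_boxes[1] = [200, 40, 260, 80, 0.15]  # a weak detection

# Unpack one row into corner coordinates and a confidence score.
x_min, y_min, x_max, y_max, conf = example_boxes[0]
box_width, box_height = x_max - x_min, y_max - y_min
print(f"box {box_width:.0f}x{box_height:.0f} px, confidence {conf:.2f}")

# Keep only rows whose confidence (column 4) exceeds an illustrative threshold.
confident = example_boxes[example_boxes[:, 4] > 0.3]
print(confident.shape)
```

Only the first row passes the threshold here, so `confident` holds a single box.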

# Install openvino package

%pip install -q "openvino>=2023.1.0"

Note: you may need to restart the kernel to use updated packages.

Imports

import cv2

import matplotlib.pyplot as plt

import numpy as np

import openvino as ov

from pathlib import Path

# Fetch `notebook_utils` module

import urllib.request

urllib.request.urlretrieve(
    url='https://raw.githubusercontent.com/openvinotoolkit/openvino_notebooks/main/notebooks/utils/notebook_utils.py',
    filename='notebook_utils.py'
)

from notebook_utils import download_file

Download model weights

base_model_dir = Path("./model").expanduser()

model_name = "horizontal-text-detection-0001"

model_xml_name = f'{model_name}.xml'

model_bin_name = f'{model_name}.bin'

model_xml_path = base_model_dir / model_xml_name

model_bin_path = base_model_dir / model_bin_name

if not model_xml_path.exists():
    model_xml_url = "https://storage.openvinotoolkit.org/repositories/open_model_zoo/2022.3/models_bin/1/horizontal-text-detection-0001/FP32/horizontal-text-detection-0001.xml"
    model_bin_url = "https://storage.openvinotoolkit.org/repositories/open_model_zoo/2022.3/models_bin/1/horizontal-text-detection-0001/FP32/horizontal-text-detection-0001.bin"

    download_file(model_xml_url, model_xml_name, base_model_dir)
    download_file(model_bin_url, model_bin_name, base_model_dir)
else:
    print(f'{model_name} already downloaded to {base_model_dir}')
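
The download-if-missing pattern used above can also be sketched with the standard library alone. `fetch_if_missing` is a hypothetical helper written for illustration. It is not part of `notebook_utils`, and unlike `download_file` it shows no progress bar.

```python
import urllib.request
from pathlib import Path


def fetch_if_missing(url: str, target: Path) -> bool:
    """Download `url` to `target` unless the file already exists.

    Returns True if a download happened and False if the file was already there.
    (Hypothetical helper, not part of notebook_utils.)
    """
    if target.exists():
        return False
    # Create the destination directory (for example ./model) on first use.
    target.parent.mkdir(parents=True, exist_ok=True)
    urllib.request.urlretrieve(url, str(target))
    return True


# Example (not executed here), reusing the names defined in the cell above:
# fetch_if_missing(model_xml_url, model_xml_path)
# fetch_if_missing(model_bin_url, model_bin_path)
```

Checking each file separately, rather than only the `.xml` file, also covers the case where one of the two downloads was interrupted.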

Select inference device

Select a device from the dropdown list for running inference using OpenVINO.

import ipywidgets as widgets

core = ov.Core()

device = widgets.Dropdown(
    options=core.available_devices + ["AUTO"],
    value='AUTO',
    description='Device:',
    disabled=False,
)

device

Dropdown(description='Device:', index=1, options=('CPU', 'AUTO'), value='AUTO')
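
When the notebook runs outside an interactive session, the same choice can be made in code. The sketch below is one possible approach: `pick_device` is a hypothetical helper, and the preference order is an assumption. In the notebook, the list of devices would come from `core.available_devices`.

```python
def pick_device(available, preferred=("GPU", "CPU")):
    """Return the first preferred device that is available, else "AUTO".

    Hypothetical helper for illustration; the preference order is an assumption.
    """
    for name in preferred:
        # Device names may carry an index suffix such as "GPU.0".
        if any(dev == name or dev.startswith(name + ".") for dev in available):
            return name
    return "AUTO"


print(pick_device(["CPU"]))           # CPU
print(pick_device(["CPU", "GPU.0"]))  # GPU
print(pick_device([]))                # AUTO
```

The returned string can be passed as `device_name` when compiling the model, in the same way as the dropdown value.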

Load the Model

core = ov.Core()

model = core.read_model(model=model_xml_path)

# Compile the model for the device selected in the dropdown above.
compiled_model = core.compile_model(model=model, device_name=device.value)

input_layer_ir = compiled_model.input(0)

output_layer_ir = compiled_model.output("boxes")

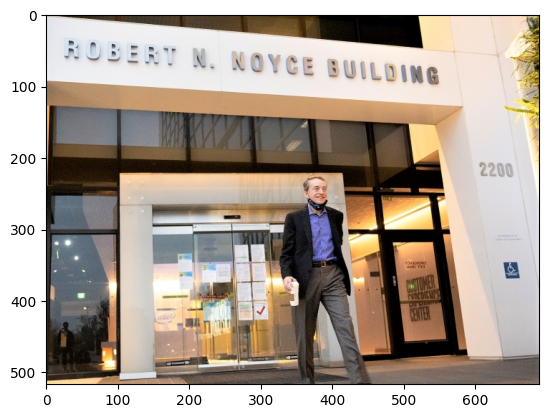

Load an Image

# Download the image from the openvino_notebooks storage

image_filename = download_file(
    "https://storage.openvinotoolkit.org/repositories/openvino_notebooks/data/data/image/intel_rnb.jpg",
    directory="data"
)

# Text detection models expect an image in BGR format.

image = cv2.imread(str(image_filename))

# N,C,H,W = batch size, number of channels, height, width.

N, C, H, W = input_layer_ir.shape

# Resize the image to meet network expected input sizes.

resized_image = cv2.resize(image, (W, H))

# Reshape to the network input shape.

input_image = np.expand_dims(resized_image.transpose(2, 0, 1), 0)

plt.imshow(cv2.cvtColor(image, cv2.COLOR_BGR2RGB));
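
The layout change in the preprocessing step can be checked on a dummy array without loading the model. This is a sketch only: the 704 x 704 size is an assumption for illustration (the real size comes from `input_layer_ir.shape`), and the `dummy_*` names are chosen so that they do not overwrite the notebook's variables.

```python
import numpy as np

# Stand-in for a resized BGR image in OpenCV's height x width x channels layout.
# 704 x 704 is an assumed size; read the real one from input_layer_ir.shape.
dummy_h, dummy_w = 704, 704
dummy_hwc = np.zeros((dummy_h, dummy_w, 3), dtype=np.uint8)

# Move channels first (HWC -> CHW), then add a leading batch axis (CHW -> NCHW).
dummy_chw = dummy_hwc.transpose(2, 0, 1)
dummy_nchw = np.expand_dims(dummy_chw, 0)

print(dummy_hwc.shape)   # (704, 704, 3)
print(dummy_nchw.shape)  # (1, 3, 704, 704)
```

Note that `cv2.resize` takes its target size as (width, height), which is why the notebook passes `(W, H)` rather than `(H, W)`.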

Do Inference

# Run inference by calling the compiled model, then read the "boxes" output.
boxes = compiled_model([input_image])[output_layer_ir]

# Remove rows that contain only zeros.
boxes = boxes[~np.all(boxes == 0, axis=1)]
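
The boolean mask used to drop empty rows is easier to follow on a small array. The values below are made up for illustration, and the names `demo`, `is_empty`, and `kept` are not part of the notebook.

```python
import numpy as np

demo = np.array(
    [
        [10.0, 20.0, 110.0, 60.0, 0.95],
        [0.0, 0.0, 0.0, 0.0, 0.0],     # a row of zeros only
        [0.0, 5.0, 50.0, 30.0, 0.40],  # x_min is 0, but the row is not all zeros
    ]
)

# True for rows in which every value equals 0.
is_empty = np.all(demo == 0, axis=1)
# Invert the mask to keep the rows that carry a detection.
kept = demo[~is_empty]

print(is_empty.tolist())  # [False, True, False]
print(kept.shape)         # (2, 5)
```

Because `np.all` is applied along `axis=1`, a box that touches the left or top image edge (a single zero coordinate) is kept.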

Visualize Results

# For each detection, the description is in the [x_min, y_min, x_max, y_max, conf] format.
# The image passed here is in BGR format with changed width and height. To display it in colors expected by matplotlib, use the cvtColor function.
def convert_result_to_image(bgr_image, resized_image, boxes, threshold=0.3, conf_labels=True):
    # Define colors for boxes and descriptions.
    colors = {"red": (255, 0, 0), "green": (0, 255, 0)}

    # Fetch the image shapes to calculate a ratio.
    (real_y, real_x), (resized_y, resized_x) = bgr_image.shape[:2], resized_image.shape[:2]
    ratio_x, ratio_y = real_x / resized_x, real_y / resized_y

    # Convert the base image from BGR to RGB format.
    rgb_image = cv2.cvtColor(bgr_image, cv2.COLOR_BGR2RGB)

    # Iterate through non-zero boxes.
    for box in boxes:
        # Pick a confidence factor from the last place in an array.
        conf = box[-1]
        if conf > threshold:
            # Convert float to int and multiply corner position of each box by x and y ratio.
            # If the bounding box is found at the top of the image,
            # position the upper box bar a little lower to make it visible on the image.
            (x_min, y_min, x_max, y_max) = [
                int(max(corner_position * ratio_y, 10)) if idx % 2
                else int(corner_position * ratio_x)
                for idx, corner_position in enumerate(box[:-1])
            ]

            # Draw a box based on the position. Parameters in the rectangle function are: image, start_point, end_point, color, thickness.
            rgb_image = cv2.rectangle(rgb_image, (x_min, y_min), (x_max, y_max), colors["green"], 3)

            # Add text to the image based on position and confidence.
            # Parameters in the putText function are: image, text, bottom-left_corner_textfield, font, font_scale, color, thickness, line_type.
            if conf_labels:
                rgb_image = cv2.putText(
                    rgb_image,
                    f"{conf:.2f}",
                    (x_min, y_min - 10),
                    cv2.FONT_HERSHEY_SIMPLEX,
                    0.8,
                    colors["red"],
                    1,
                    cv2.LINE_AA,
                )

    return rgb_image

plt.figure(figsize=(10, 6))

plt.axis("off")

plt.imshow(convert_result_to_image(image, resized_image, boxes, conf_labels=False));
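
The coordinate scaling inside `convert_result_to_image` can be isolated into a small pure-Python function, which makes the arithmetic easy to check by hand. `scale_box` is a hypothetical helper that mirrors the logic above, and the image sizes in the example are assumptions.

```python
def scale_box(box, real_shape, resized_shape, min_y=10):
    """Map [x_min, y_min, x_max, y_max] from resized-image to original-image pixels.

    Shapes are (height, width). y values are clamped to at least `min_y`,
    mirroring the notebook's handling of boxes at the top edge.
    (Hypothetical helper for illustration.)
    """
    real_y, real_x = real_shape
    resized_y, resized_x = resized_shape
    ratio_x, ratio_y = real_x / resized_x, real_y / resized_y
    x_min, y_min, x_max, y_max = box
    return (
        int(x_min * ratio_x),
        int(max(y_min * ratio_y, min_y)),
        int(x_max * ratio_x),
        int(max(y_max * ratio_y, min_y)),
    )


# A box found on an assumed 704 x 704 input, mapped back to a 1408 x 2112 (H x W) original.
print(scale_box([100, 2, 200, 50], (1408, 2112), (704, 704)))  # (300, 10, 600, 100)
```

Here the x ratio is 3 and the y ratio is 2, and the scaled `y_min` of 4 is raised to 10 so that the top edge of the box stays visible.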