OpenVINO 2023.3

See latest benchmark numbers for OpenVINO and OpenVINO Model Server

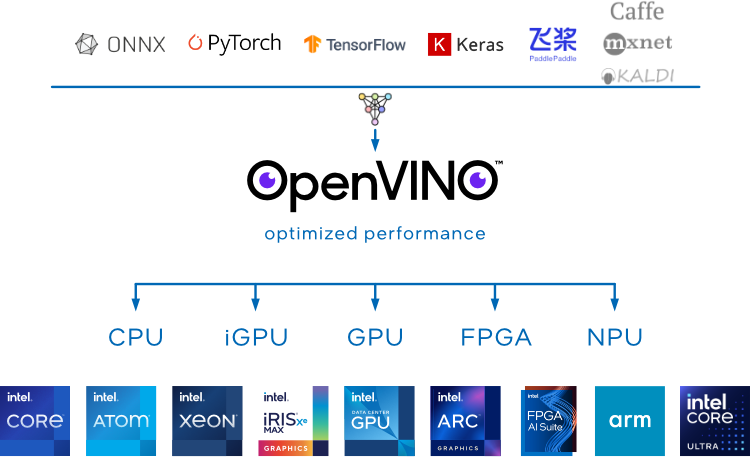

OpenVINO supports different model formats: PyTorch, TensorFlow, TensorFlow Lite, ONNX, and PaddlePaddle.

Cloud-ready deployments for microservice applications

Boost performance using quantization and compression with NNCF

Optimize generation of the graph model with PyTorch 2.0 torch.compile() backend

Enhance the efficiency of Generative AI

Feature Overview

You can either link directly with OpenVINO Runtime to run inference locally, or use OpenVINO Model Server to serve model inference from a separate server or within a Kubernetes environment.
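For the served option, a client only needs to send an HTTP request. The following is a minimal sketch that assumes a Model Server instance exposing its TensorFlow Serving-compatible REST endpoint; the host, port, and model name are hypothetical placeholders, and `build_predict_request` and `remote_predict` are illustrative helpers, not part of OpenVINO.

```python
import json
import urllib.request


def build_predict_request(host, port, model_name, instances):
    """Build a POST request for a TensorFlow Serving-style predict endpoint.

    host, port, and model_name are placeholders for your own deployment.
    """
    url = f"http://{host}:{port}/v1/models/{model_name}:predict"
    body = json.dumps({"instances": instances}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def remote_predict(host, port, model_name, instances, timeout=10.0):
    """Send the request and return the 'predictions' field of the JSON reply."""
    request = build_predict_request(host, port, model_name, instances)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())["predictions"]
```

Keeping request construction separate from the network call makes it possible to inspect the URL and payload without a running server.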

Write an application once and deploy it anywhere, achieving maximum performance from the hardware. Automatic device discovery allows for greater deployment flexibility. OpenVINO Runtime supports Linux, Windows, and macOS and provides Python, C++, and C APIs, so you can use your preferred language and OS.
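As an illustration of device discovery, the sketch below queries the devices OpenVINO Runtime reports and compiles a model for one of them. It assumes the `openvino` Python package is installed; `choose_device` and its preference order are hypothetical and only show one possible policy. Passing `"AUTO"` as the device name lets the runtime make the selection itself.

```python
def choose_device(available, preferred=("GPU", "CPU")):
    """Return the first available device matching the preference order.

    Device names may carry an index suffix such as "GPU.0", so only the
    part before the dot is compared. Falls back to "AUTO" if nothing matches.
    """
    for kind in preferred:
        for name in available:
            if name.split(".")[0] == kind:
                return name
    return "AUTO"


def compile_for_best_device(model_path):
    """Compile a model file for the device chosen by choose_device()."""
    import openvino as ov  # imported lazily so the helper above has no dependencies

    core = ov.Core()
    device = choose_device(core.available_devices)
    return core.compile_model(model_path, device)
```

The same application code then runs unchanged on machines with different hardware; only the discovered device list differs.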

OpenVINO is designed with minimal external dependencies, which reduces the application footprint and simplifies installation and dependency management. Popular package managers make it easy to install and upgrade application dependencies. Custom compilation for your specific model(s) further reduces the final binary size.

In applications where fast start-up is required, OpenVINO significantly reduces first-inference latency by using the CPU for initial inference and then switching to another device once the model has been compiled and loaded into memory. Compiled models are also cached, which improves start-up time even further.
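A minimal sketch of enabling the model cache from Python is shown below. It assumes the `openvino` package is installed and uses the `CACHE_DIR` property together with the `AUTO` device; the default directory name `model_cache` and the helper names are hypothetical.

```python
from pathlib import Path


def cache_config(cache_dir):
    """Create the cache directory if needed and return the property dict."""
    path = Path(cache_dir)
    path.mkdir(parents=True, exist_ok=True)
    return {"CACHE_DIR": str(path)}


def compile_with_cache(model_path, cache_dir="model_cache", device="AUTO"):
    """Compile a model with caching enabled.

    On later runs, a device that supports caching can load the compiled
    blob from cache_dir instead of compiling the model again.
    """
    import openvino as ov  # imported lazily so cache_config() stays dependency-free

    core = ov.Core()
    core.set_property(cache_config(cache_dir))
    return core.compile_model(model_path, device)
```

The first call pays the full compilation cost and fills the cache; whether later calls benefit depends on the target device supporting model import and export.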