Low-precision 8-bit inference is optimized for:

Deep learning research has long explored low-precision computation during inference as a way to speed up deep learning pipelines. One popular approach is to reduce the precision of activation and weight values from fp32 to a smaller format, for example, fp11 or int8. For more information about this approach, refer to the Brief History of Lower Precision in Deep Learning section in this whitepaper.

8-bit computations (referred to as int8) offer better performance than inference in higher precision (for example, fp32), because more data can be loaded into a single processor instruction. The usual cost of this significant boost is reduced accuracy. However, it has been shown that the accuracy drop can be negligible, and the acceptable drop depends on task requirements, so the application engineer can set the maximum accuracy drop that is acceptable.

Let's explore the quantized TensorFlow* implementation of the ResNet-50 model. Use the Model Downloader tool to download the fp16 model from the OpenVINO™ Toolkit - Open Model Zoo repository:

After that, quantize the model with the Model Quantizer tool.

The simplest way to infer the model and collect performance counters is to use the C++ Benchmark Application.

If you infer the model with the OpenVINO™ CPU plugin and collect performance counters, all operations (except the last, non-quantized SoftMax) are executed in INT8 precision.

For 8-bit integer computations, a model must be quantized. Quantized models can be downloaded from Overview of OpenVINO™ Toolkit Intel's Pre-Trained Models. If the model is not quantized, you can use the Post-Training Optimization Tool to quantize it. The quantization process adds FakeQuantize layers on activations and weights for most layers. Read more about the mathematical computations in Uniform Quantization with Fine-Tuning.

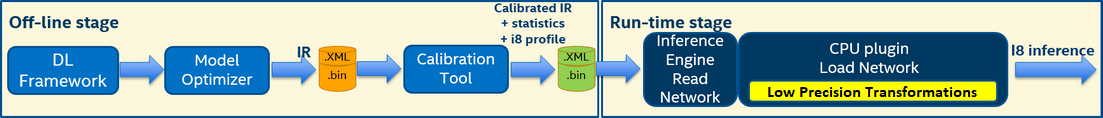

The 8-bit inference pipeline includes two stages (also refer to the figure above):

Offline stage, or model quantization. During this stage, FakeQuantize layers are added before most layers so that their input tensors are quantized, in a way that keeps the accuracy drop for 8-bit integer inference within the specified threshold. The output of this stage is a quantized model. The precision of the quantized model is not changed: quantized tensors remain in the original precision range (fp32). The FakeQuantize layer has a levels attribute, which defines the number of quantization levels (quants). This number defines the precision that is used during inference. For the int8 range, the levels attribute value must be 255 or 256. To quantize the model, you can use the Post-Training Optimization Tool delivered with the Intel® Distribution of OpenVINO™ toolkit release package.
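The effect of the levels attribute can be illustrated with a minimal sketch. It assumes the element-wise formula given in the FakeQuantize operation specification; the function name `fake_quantize`, the input and output ranges, and the sample values are illustrative assumptions, not part of any OpenVINO API.

```python
def fake_quantize(x, input_low, input_high, output_low, output_high, levels):
    """Element-wise FakeQuantize on a single float (illustrative sketch)."""
    if x <= min(input_low, input_high):
        return output_low
    if x > max(input_low, input_high):
        return output_high
    # Snap x to one of `levels` evenly spaced points of the input range,
    # then map that point into the output range.
    step = round((x - input_low) / (input_high - input_low) * (levels - 1))
    return step / (levels - 1) * (output_high - output_low) + output_low


# Hypothetical activation range [0.0, 1.0] with levels=256 (int8 range).
samples = [i / 10000 for i in range(10001)]
distinct = {fake_quantize(s, 0.0, 1.0, 0.0, 1.0, 256) for s in samples}

# The outputs are still floats, but only `levels` different values occur.
print(len(distinct))
print(fake_quantize(0.1234, 0.0, 1.0, 0.0, 1.0, 256))
```

The outputs stay in the original floating-point range, which matches the statement that the quantized model precision is not changed at this stage. Only the number of distinct values a tensor can take is limited.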

When you pass the quantized IR to the OpenVINO™ plugin, the plugin automatically recognizes it as a quantized model and performs 8-bit inference. Note that if you pass a quantized model to another plugin that does not support 8-bit inference but supports all operations from the model, the model is inferred in a precision that this plugin supports.

Run-time stage. The plugin uses the Low Precision Transformation component to update the model so that it can be inferred in low precision:

- Update FakeQuantize layers to have quantized output tensors in the low-precision range and add dequantization layers to compensate for the update. Dequantization layers are pushed through as many layers as possible so that more layers run in low precision. After that, most layers have quantized input tensors in the low-precision range and can be inferred in low precision. Ideally, dequantization layers should be fused into the next FakeQuantize layer.
- Weights are quantized and stored in Constant layers.
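Why dequantization can be pushed through a layer is easiest to see on a linear operation. The sketch below is not the Low Precision Transformation code. It assumes symmetric per-tensor scales derived from the maximum absolute value, and the helper names and sample values are hypothetical. It shows that dequantizing after an integer dot product gives the same result as dequantizing before it.

```python
def quantize(values, scale):
    """Map floats to signed 8-bit integers: q = clamp(round(v / scale))."""
    return [max(-128, min(127, round(v / scale))) for v in values]


def dot(a, b):
    """Plain dot product; stands in for one output element of a convolution."""
    return sum(x * y for x, y in zip(a, b))


# Hypothetical fp32 activations and weights.
activations = [0.50, -1.25, 2.00, 0.75]
weights = [0.10, 0.40, -0.30, 0.20]

# Assumption: symmetric per-tensor scales based on the maximum absolute value.
act_scale = max(abs(v) for v in activations) / 127
w_scale = max(abs(v) for v in weights) / 127

q_act = quantize(activations, act_scale)
q_w = quantize(weights, w_scale)

# Dequantization before the layer: the dot product runs on floats.
deq_before = dot([q * act_scale for q in q_act], [q * w_scale for q in q_w])

# Dequantization pushed through the layer: the dot product runs on integers,
# and a single multiplication by the combined scale follows it.
int_acc = dot(q_act, q_w)
deq_after = int_acc * (act_scale * w_scale)

reference = dot(activations, weights)
print(deq_before, deq_after, reference)
```

Both variants agree up to floating-point rounding, and both stay close to the fp32 reference. In the second variant the layer itself consumes only 8-bit integers, which is the situation the transformation aims for.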

Information about layer precision is stored in the performance counters that are available from the Inference Engine API. For example, part of the performance counters table for inference of the quantized TensorFlow* implementation of the ResNet-50 model on the CPU plugin looks as follows:

| layerName | execStatus | layerType | execType | realTime (ms) | cpuTime (ms) |
|---|---|---|---|---|---|
| resnet_model/batch_normalization_15/FusedBatchNorm/Add | EXECUTED | Convolution | jit_avx512_1x1_I8 | 0.377 | 0.377 |
| resnet_model/conv2d_16/Conv2D/fq_input_0 | NOT_RUN | FakeQuantize | undef | 0 | 0 |
| resnet_model/batch_normalization_16/FusedBatchNorm/Add | EXECUTED | Convolution | jit_avx512_I8 | 0.499 | 0.499 |
| resnet_model/conv2d_17/Conv2D/fq_input_0 | NOT_RUN | FakeQuantize | undef | 0 | 0 |
| resnet_model/batch_normalization_17/FusedBatchNorm/Add | EXECUTED | Convolution | jit_avx512_1x1_I8 | 0.399 | 0.399 |
| resnet_model/add_4/fq_input_0 | NOT_RUN | FakeQuantize | undef | 0 | 0 |
| resnet_model/add_4 | NOT_RUN | Eltwise | undef | 0 | 0 |
| resnet_model/add_5/fq_input_1 | NOT_RUN | FakeQuantize | undef | 0 | 0 |

The `execStatus` column of the table includes the following possible values:

- `EXECUTED` - the layer was executed by a standalone primitive.
- `NOT_RUN` - the layer was not executed by a standalone primitive, or was fused with another operation and executed in another layer's primitive.

The `execType` column of the table includes inference primitives with specific suffixes. The layers have the following marks:

- Suffix `I8` for layers that had 8-bit data type input and were computed in 8-bit precision
- Suffix `FP32` for layers computed in 32-bit precision

All Convolution layers are executed in int8 precision. The remaining layers are fused into Convolutions using the post-operations optimization technique, which is described in Internal CPU Plugin Optimizations.
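A table like the one above can also be checked programmatically. The following minimal sketch hard-codes the rows shown above instead of calling the Inference Engine API. The helper name `precision_of` and the tuple layout are assumptions made for illustration.

```python
from collections import Counter

# Rows copied from the table above: (layerName, execStatus, layerType, execType).
rows = [
    ("resnet_model/batch_normalization_15/FusedBatchNorm/Add", "EXECUTED", "Convolution", "jit_avx512_1x1_I8"),
    ("resnet_model/conv2d_16/Conv2D/fq_input_0", "NOT_RUN", "FakeQuantize", "undef"),
    ("resnet_model/batch_normalization_16/FusedBatchNorm/Add", "EXECUTED", "Convolution", "jit_avx512_I8"),
    ("resnet_model/conv2d_17/Conv2D/fq_input_0", "NOT_RUN", "FakeQuantize", "undef"),
    ("resnet_model/batch_normalization_17/FusedBatchNorm/Add", "EXECUTED", "Convolution", "jit_avx512_1x1_I8"),
    ("resnet_model/add_4/fq_input_0", "NOT_RUN", "FakeQuantize", "undef"),
    ("resnet_model/add_4", "NOT_RUN", "Eltwise", "undef"),
    ("resnet_model/add_5/fq_input_1", "NOT_RUN", "FakeQuantize", "undef"),
]


def precision_of(exec_type):
    """Classify a primitive by the suffix of its execType value."""
    if exec_type.endswith("_I8"):
        return "int8"
    if exec_type.endswith("_FP32"):
        return "fp32"
    return "unknown"


# Only EXECUTED layers ran as standalone primitives and carry a precision.
executed = [row for row in rows if row[1] == "EXECUTED"]
summary = Counter((row[2], precision_of(row[3])) for row in executed)

# NOT_RUN layers were fused into other primitives or not executed on their own.
not_run = [row[0] for row in rows if row[1] == "NOT_RUN"]

for (layer_type, precision), count in summary.items():
    print(f"{layer_type}: {count} layer(s) in {precision}")
print(f"{len(not_run)} layer(s) not run as standalone primitives")
```

For the excerpt above, every executed layer is a Convolution with the `I8` suffix, and the FakeQuantize and Eltwise layers are reported as `NOT_RUN`.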