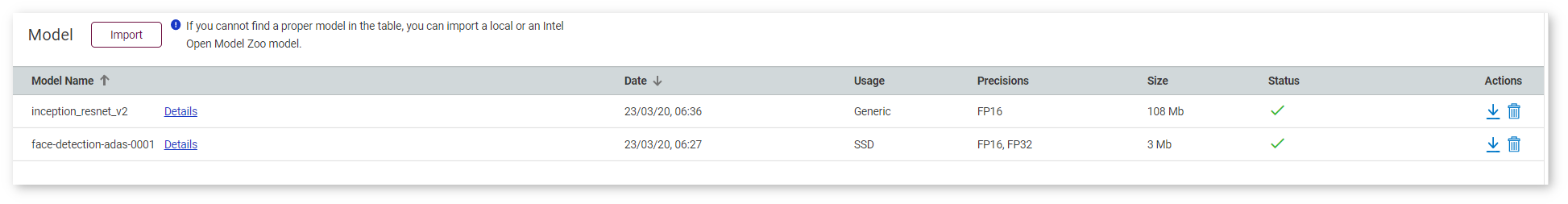

NOTE: If you have imported a model before, do not import it again. You can select it from the list of available models.

You can import original models and models from the Open Model Zoo. To start, click Import Model under the list of available models.

To import a model, follow the steps below:

- Upload a model

- Convert the model to Intermediate Representation (IR) — only for non-OpenVINO™ models

NOTE: To learn about the conversion logic, see the Model Optimizer documentation.

Once you import a model, you are directed back to the Create Configuration page, where you can select the imported model and proceed to select a dataset.

Supported Frameworks

DL Workbench supports models from the following frameworks, whether uploaded from a local folder or imported from the Open Model Zoo.

| Framework | Original Models | Open Model Zoo |
|---|---|---|
| OpenVINO™ | ✔ | ✔ |
| Caffe* | ✔ | ✔ |
| MXNet* | ✔ | ✔ |
| ONNX* | ✔ | ✔ |
| TensorFlow* | ✔ | ✔ |
| PyTorch* | ✔ |

Use Models from Open Model Zoo

NOTE: Internet connection is required for this option. If you are behind a corporate proxy, set environment variables during the Install from Docker Hub* step.

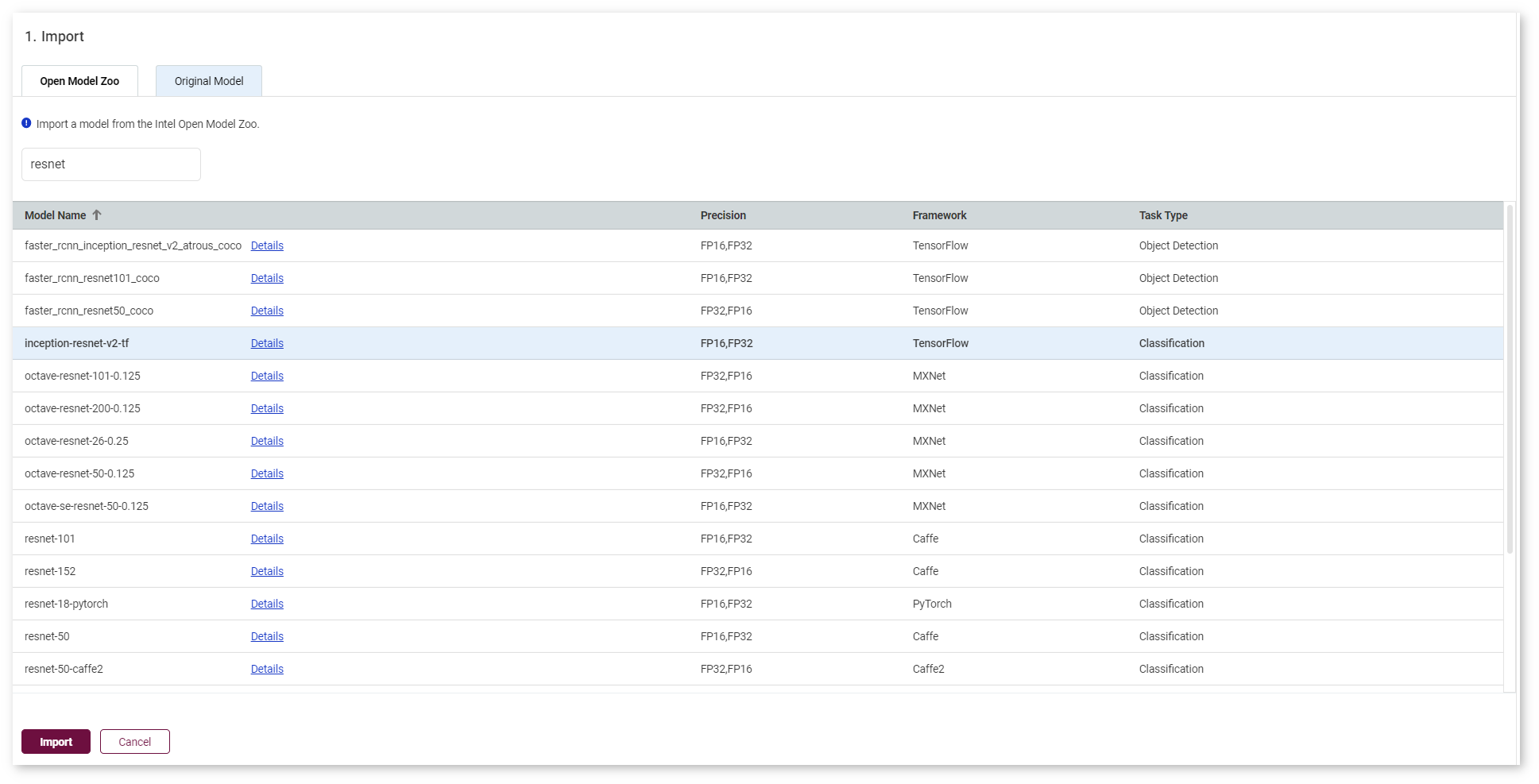

To use a model from the Open Model Zoo, go to the Open Model Zoo tab, select a model, and click Import.

TIP: Precision of models from the Open Model Zoo can be changed in the Conversion step.

Once you click Import, the Convert Model to IR section opens.

NOTE: To learn about the import process, see the Model Downloader documentation.

Upload Original Models

In the Original Model tab, you can upload original models stored in files on your operating system. The uploading process depends on the framework of your model.

Upload OpenVINO™ (IR) Models

To import an OpenVINO™ model, select the framework in the drop-down list, upload an .xml file and a .bin file, provide the name, and click Import. Since the model is already in the IR format, select the imported model and proceed to select a dataset.

Upload Caffe* Models

NOTE: To learn more about Caffe* models, refer to the article.

To import a Caffe model, select the framework in the drop-down list, upload a .prototxt file and a .caffemodel file, and provide the name.

Once you click Import, the tool analyzes your model and opens the Convert Model to IR form with prepopulated conversion settings fields, which you can change.

Upload MXNet* Models

NOTE: To learn more about MXNet models, refer to the article.

To import an MXNet model, select the framework in the drop-down list, upload a .json file and a .params file, and provide the name.

Once you click Import, the tool analyzes your model and opens the Convert Model to IR form with prepopulated conversion settings fields, which you can change.

Upload ONNX* Models

NOTE: To learn more about ONNX models, refer to the article.

To import an ONNX model, select the framework in the drop-down list, upload an .onnx file, and provide the name.

Once you click Import, the tool analyzes your model and opens the Convert Model to IR form with prepopulated conversion settings fields, which you can change.

Upload TensorFlow* Models

NOTE: To learn about the difference between frozen and non-frozen TensorFlow models, refer to Freeze Tensorflow models and serve on web.

Upload Frozen TensorFlow Models

To import a frozen TensorFlow model, select the framework in the drop-down list, upload a .pb or .pbtxt file, provide the name, and make sure the Is Frozen Model box is checked.

You can also check the Use TensorFlow Object Detection API box and upload a pipeline configuration file.

Upload Non-Frozen TensorFlow Models

To import a non-frozen TensorFlow model, select the framework in the drop-down list, provide the name, and uncheck the Is Frozen Model box. Then select the input file type: Checkpoint or MetaGraph.

If you select the Checkpoint file type, provide the following files:

- .pb or .pbtxt file

- .checkpoint file

If you select the MetaGraph file type, provide the following files:

- .meta file

- .index file

- data file

Regardless of the file type, you can also check the Use TensorFlow Object Detection API box and upload a pipeline configuration file.

Once you click Import, the tool analyzes your model and opens the Convert Model to IR form with prepopulated conversion settings fields, which you can change.
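The file requirements described in the upload sections above can be summarized as a small pre-upload check. This is only an illustrative sketch: the `REQUIRED_FILES` table and the `missing_files` helper are hypothetical names, not part of DL Workbench, and the MetaGraph data file is left out because its naming is not specified here.

```python
from pathlib import PurePath

# Required upload files per model kind, transcribed from the sections above.
# Each entry is a list of "slots"; a slot is a tuple of acceptable suffixes.
# The keys are hypothetical labels chosen for this sketch.
REQUIRED_FILES = {
    "openvino": [(".xml",), (".bin",)],
    "caffe": [(".prototxt",), (".caffemodel",)],
    "mxnet": [(".json",), (".params",)],
    "onnx": [(".onnx",)],
    "tf_frozen": [(".pb", ".pbtxt")],
    "tf_checkpoint": [(".pb", ".pbtxt"), (".checkpoint",)],
    # MetaGraph also needs a data file; it is not checked in this sketch.
    "tf_metagraph": [(".meta",), (".index",)],
}


def missing_files(kind, filenames):
    """Return the slots of `kind` that no file in `filenames` satisfies."""
    suffixes = {PurePath(name).suffix.lower() for name in filenames}
    return [slot for slot in REQUIRED_FILES[kind] if not suffixes.intersection(slot)]


if __name__ == "__main__":
    # A Caffe upload with only the topology file is missing the weights file.
    print(missing_files("caffe", ["net.prototxt"]))
```

An empty result means every required slot is covered, so the set of files matches what the corresponding upload form asks for.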

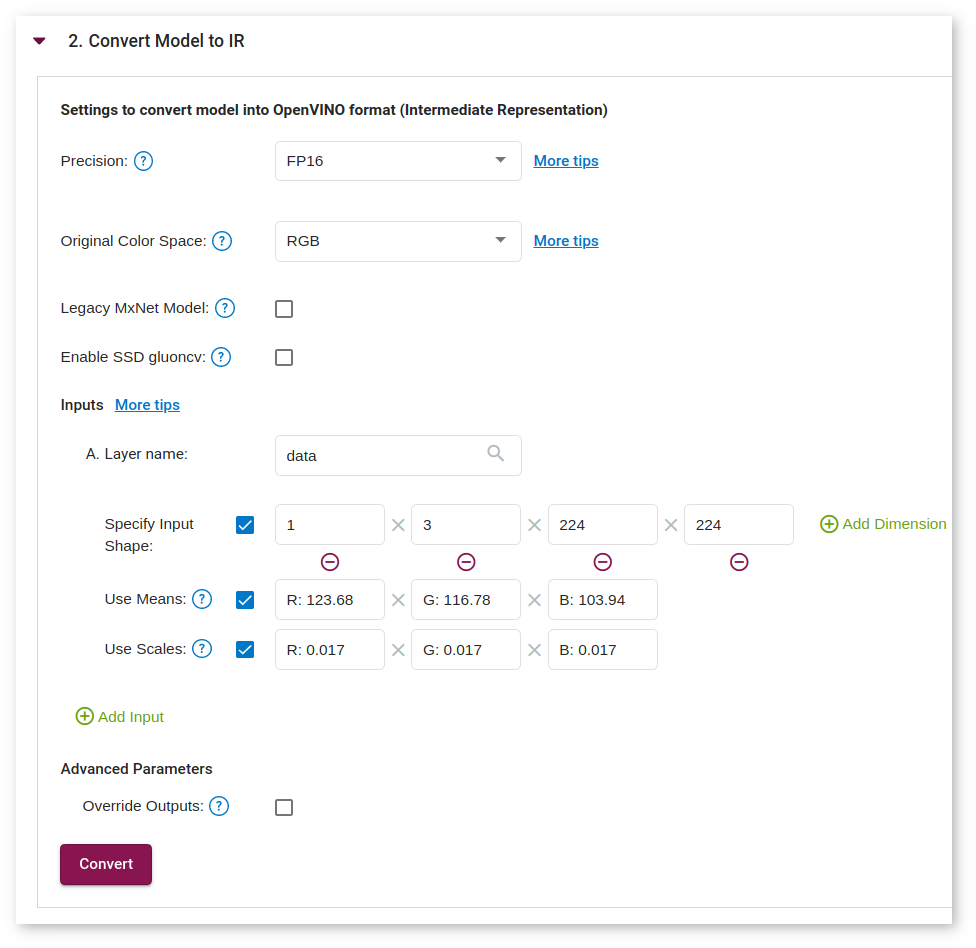

Convert Models to Intermediate Representation (IR)

You are automatically directed to the Convert Model to IR step after uploading a model. Besides general framework-agnostic parameters, you might need to specify framework-specific or advanced framework-agnostic parameters.

NOTE: For details on the conversion process, refer to Converting a Model to Intermediate Representation.

General Framework-Agnostic Parameters

Refer to the table below to learn about parameters shared by all frameworks.

| Parameter | Values | Description |
|---|---|---|
| Batch number | 1-256 | How many images at a time are propagated to a neural network |
| Precision | FP16 or FP32 | Precision of a converted model |
| Original color space | RGB or BGR | Color space of an original model |
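The value ranges in the table can be expressed as a simple validation step, which may help when preparing conversion settings in a script before entering them in the form. The `GeneralParams` class is a hypothetical name used for this sketch only; it mirrors the table and does not reflect how DL Workbench stores these settings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GeneralParams:
    """Framework-agnostic conversion settings, constrained as in the table."""

    batch: int
    precision: str
    color_space: str

    def __post_init__(self):
        # Batch number must be within 1-256.
        if not 1 <= self.batch <= 256:
            raise ValueError(f"batch must be in 1-256, got {self.batch}")
        # Precision of the converted model.
        if self.precision not in ("FP16", "FP32"):
            raise ValueError(f"precision must be FP16 or FP32, got {self.precision}")
        # Color space of the original model.
        if self.color_space not in ("RGB", "BGR"):
            raise ValueError(f"color space must be RGB or BGR, got {self.color_space}")


if __name__ == "__main__":
    print(GeneralParams(batch=8, precision="FP16", color_space="RGB"))
```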

Framework-Specific Parameters

When importing TensorFlow* models, provide an additional pipeline configuration file and choose a model conversion configuration file. For details, refer to Import Frozen TensorFlow SSD MobileNet v2 COCO Tutorial.

Inputs

NOTE: Input layers are required for MXNet* models.

You can use default input layers or cut a model by specifying the layers you want to consider as input ones. To change default input layers, provide information about the layers:

TIP: To add more than one layer, click the Add Input button. To remove layers, click on the red remove sign next to the name of a layer.

- Name

- Input shape. To add or remove dimensions, click Add Dimension or the purple minus sign respectively.

- Optionally:

  - Use means: mean values to be used for the input image per channel

  - Use scales: scale values to be used for the input image per channel

NOTE: To learn more about means and scales, see Converting a Model Using General Conversion Parameters.
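To make the effect of means and scales concrete, the sketch below applies them to a single pixel. It assumes the convention documented for the Model Optimizer, where the mean is subtracted first and the result is then divided by the scale, per channel. The function name `normalize_pixel` is hypothetical and the values are examples only.

```python
def normalize_pixel(pixel, means, scales):
    """Apply (value - mean) / scale to each channel of one pixel."""
    return [(value - mean) / scale for value, mean, scale in zip(pixel, means, scales)]


if __name__ == "__main__":
    # Example values: map the 0-255 range of each channel to roughly -1..1.
    means = [127.5, 127.5, 127.5]
    scales = [127.5, 127.5, 127.5]
    print(normalize_pixel([255, 128, 0], means, scales))
```

When means and scales are set during conversion, this arithmetic becomes part of the converted model, so the input images do not need to be normalized separately.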

Advanced Framework-Agnostic Parameters

You can use default output layers or cut a model by specifying the layers you want to consider as output ones. To change default output layers, check the Override Outputs box and provide the name of a layer.

TIP: To add more than one layer, click the Add Output button. To remove layers, click on the red remove sign next to the name of a layer.

To find out the names of layers, view text files with a model in an editor or visualize the model in the Netron neural network viewer.

NOTE: For more information on preprocessing parameters, refer to Converting a Model Using General Conversion Parameters.

Convert Original Caffe* or ONNX* Model

To convert a Caffe* or an ONNX* model, provide the following information in the General Parameters section:

- Batch

- Precision

- Color space

You can also use default values by checking the box in the Advanced Parameters section.

NOTE: For details on converting Caffe and ONNX models, refer to Converting a Caffe* Model and Converting an ONNX* Model.

Once you click Convert, you are directed back to the Create Configuration page, where you can select the imported model and proceed to select a dataset.

Convert Original MXNet* Model

To convert an MXNet model, provide the following information in the General Parameters section:

- Batch

- Precision

- Color space

In the same section, specify framework-specific parameters by checking the boxes:

- Legacy MXNet Model: Enables the MXNet loader to make a model compatible with the latest MXNet version. Check only if your model was trained with an MXNet version lower than 1.0.0.

- SSD GluonCV: Enables transformation for converting the GluonCV SSD topologies. Check only if your topology is one of the SSD GluonCV topologies.

Checking the Use Default Values box in the Advanced Parameters section allows you to choose to use inputs and/or outputs.

Once you click Convert, you are directed back to the Create Configuration page, where you can select the imported model and proceed to select a dataset.

NOTE: For details on converting MXNet models, refer to Converting an MXNet* Model.
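For readers who also run the Model Optimizer from the command line, the sketch below shows how the form fields in this section might correspond to Model Optimizer options. The option names are those of the Model Optimizer command line, but the mapping itself is an assumption: this page does not describe how DL Workbench invokes the converter. In particular, adding `--reverse_input_channels` for RGB models is a guess. The helper name `build_mo_args` is hypothetical.

```python
def build_mo_args(input_model, batch, precision, color_space,
                  legacy_mxnet=False, ssd_gluoncv=False):
    """Build a Model Optimizer argument list from form-like settings."""
    args = [
        "--input_model", input_model,
        "--batch", str(batch),
        "--data_type", precision,
    ]
    if color_space == "RGB":
        # Assumption: an RGB original model gets its input channels reversed.
        args.append("--reverse_input_channels")
    if legacy_mxnet:
        # Corresponds to the Legacy MXNet Model checkbox.
        args.append("--legacy_mxnet_model")
    if ssd_gluoncv:
        # Corresponds to the SSD GluonCV checkbox.
        args.append("--enable_ssd_gluoncv")
    return args


if __name__ == "__main__":
    # "model-0000.params" is a placeholder file name.
    print(" ".join(build_mo_args("model-0000.params", 1, "FP16", "RGB",
                                 legacy_mxnet=True)))
```

The function only assembles arguments and does not run anything, so it can be used to compare settings before choosing them in the form.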

Convert Original TensorFlow* Model

To convert a TensorFlow model, provide the following information in the General Parameters section:

- Batch

- Precision

- Color space

Checking the Use Default Values box in the Advanced Parameters section allows you to choose to use inputs and/or outputs.

Once you click Convert, you are directed back to the Create Configuration page, where you can select the imported model and proceed to select a dataset.

NOTE: For details on converting TensorFlow models, refer to Converting a TensorFlow* Model.

Convert Model from Open Model Zoo

To convert a model from the Open Model Zoo, provide the precision in the General Parameters section.

Once you click Convert, you are directed back to the Create Configuration page, where you can select the imported model and proceed to select a dataset.

NOTE: If you are behind a corporate proxy, set environment variables during the Install from Docker* Hub step.