Model Optimizer is a cross-platform command-line tool that facilitates the transition between the training and deployment environments, performs static model analysis, and adjusts deep learning models for optimal execution on end-point target devices.

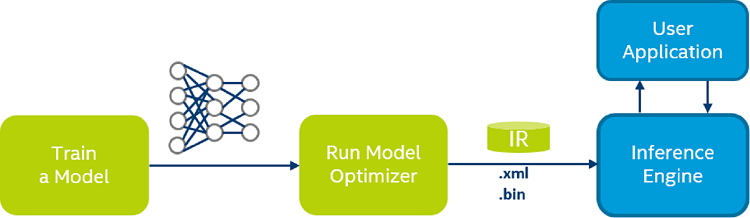

Model Optimizer process assumes you have a network model trained using a supported deep learning framework. The scheme below illustrates the typical workflow for deploying a trained deep learning model:

Model Optimizer produces an Intermediate Representation (IR) of the network, which can be read, loaded, and inferred with the Inference Engine. The Inference Engine API offers a unified API across a number of supported Intel® platforms. The Intermediate Representation is a pair of files describing the model:

- .xml - Describes the network topology.

- .bin - Contains the weights and biases binary data.
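As a rough illustration of the file pair, the following Python sketch derives the .bin path from an .xml path and lists the layers recorded in a topology file. It assumes that both files share a base name, that the XML root carries a "version" attribute, and that each layer is a "layer" element with "name" and "type" attributes. The helper names ir_pair and list_layers and the sample XML are hypothetical and are not part of the Model Optimizer or the Inference Engine API.

```python
import xml.etree.ElementTree as ET
from pathlib import Path


def ir_pair(xml_path):
    """Return (xml, bin) paths for an IR, assuming both share a base name."""
    xml_file = Path(xml_path)
    return xml_file, xml_file.with_suffix(".bin")


def list_layers(xml_text):
    """Return (IR version, [(layer name, layer type), ...]) from IR XML text.

    Assumption: the root element has a "version" attribute and every layer
    is a <layer> element with "name" and "type" attributes.
    """
    root = ET.fromstring(xml_text.strip())
    layers = [(layer.get("name"), layer.get("type"))
              for layer in root.iter("layer")]
    return root.get("version"), layers


# Hand-written stand-in for a real .xml file, for illustration only.
SAMPLE_IR = """
<net name="toy" version="10">
  <layers>
    <layer id="0" name="data" type="Parameter"/>
    <layer id="1" name="out" type="Result"/>
  </layers>
</net>
"""

if __name__ == "__main__":
    print(ir_pair("model.xml"))
    print(list_layers(SAMPLE_IR))
```

The sketch only inspects the topology file. Reading the weights and running inference is the job of the Inference Engine.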

What's New in the Model Optimizer in this Release?

- ONNX*:

  - Added support for the following ONNX* operations: Abs, Acos, Asin, Atan, Cast, Ceil, Cos, Cosh, Div, Erf, Floor, HardSigmoid, Log, NonMaxSuppression, OneHot, ReduceMax, ReduceProd, Resize (opset 10), Sign, Sin, Sqrt, Tan, Xor.

  - Added support for the following models from the ONNX* model zoo:

    - SSD ResNet: https://onnxzoo.blob.core.windows.net/models/opset_10/ssd/ssd.onnx

    - Mask RCNN: https://onnxzoo.blob.core.windows.net/models/opset_10/mask_rcnn/mask_rcnn_R_50_FPN_1x.onnx. Refer to the documentation on how to convert the model.

- TensorFlow*:

  - Added an optimization transformation that detects the Mean Value Normalization pattern for 5D input and replaces it with a single MVN layer.

  - Added the ability to read TensorFlow 1.X models when TensorFlow 2.X is installed. TensorFlow 2.X models are not supported.

  - Changed the command line for converting the GNMT model. Refer to the GNMT model conversion article for more information.

  - Deprecated the "--tensorflow_subgraph_patterns" and "--tensorflow_operation_patterns" command line parameters. The TensorFlow offload feature will be removed in future releases.

  - Added support for the following TensorFlow* operations: Bucketize (CPU only), Cast, Cos, Cosh, ExperimentalSparseWeightedSum (CPU only), Log1p, NonMaxSuppressionV3, NonMaxSuppressionV4, NonMaxSuppressionV5, Sin, Sinh, SparseToDense (CPU only), SparseReshape (removed when input and output shapes are equal), Tan, Tanh.

  - Added support for the following TensorFlow* models:

    - Wide and Deep models family (CPU only).

    - MobileNetV3.

- MXNet*:

  - Added support for the following MXNet* operations: UpSampling with bilinear mode, Where, _arange, _contrib_AdaptiveAvgPooling2D, div_scalar, elementwise_sub, exp, expand_dims, greater_scalar, minus_scalar, repeat, slice, slice_like, tile.

  - Added support for the following MXNet* models: YoloV3 model from the GluonCV model zoo.

- Kaldi*:

  - The "--remove_output_softmax" command line parameter now triggers removal of the final LogSoftmax layer in addition to a pure Softmax layer.

  - Added support for the following Kaldi* operations: linearcomponent, logsoftmax.

  - Added support for the following Kaldi* models:

    - Aspire TDNN: http://kaldi-asr.org/models/1/0001_aspire_chain_model.tar.gz. Refer to the documentation on how to infer the model using the speech_sample.

    - Librispeech nnet3: https://github.com/ryanleary/kaldi-test/releases/download/v0.0/LibriSpeech-trained.tgz

- Common changes:

  - Model Optimizer generates IR version 10 by default (except for the Kaldi* framework, for which IR version 7 is generated) with significantly changed operation semantics. The "--generate_deprecated_IR_V7" command line parameter can be used to generate the older version of the IR. Refer to the documentation for the specification of the new operation set.

  - "--tensorflow_use_custom_operations_config" has been renamed to "--transformations_config". The old command line parameter is deprecated and will be removed in future releases.

  - Added the ability to specify the input data type using the "--input" command line parameter. For example, "--input placeholder{i32}[1 300 300 3]". Refer to the documentation for more examples.

  - The IR v7 XML file format has been updated: each layer output port now has a "precision" attribute with the data type of the produced tensor.

  - Added support for the FusedBatchNorm operation in training mode.

  - Added an optimization transformation to remove useless Concat+Split sub-graphs.

  - A number of graph transformations were moved from the Model Optimizer to the Inference Engine.

  - Fixed NetworkX 2.4+ compatibility issues.
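To make the command line changes listed above more concrete, here is a minimal Python sketch that assembles a Model Optimizer argument list and rewrites the renamed parameter. The parameters "--input", "--transformations_config" and "--generate_deprecated_IR_V7" come from the notes above. The mo.py script name and the "--input_model" parameter are assumed here as the usual entry point and model argument, and the function names and file names are hypothetical placeholders. The sketch only builds the command and does not run it.

```python
import shlex

# Deprecated parameter name from the notes above, mapped to its replacement.
RENAMED = {
    "--tensorflow_use_custom_operations_config": "--transformations_config",
}


def build_mo_command(input_model, input_spec=None,
                     transformations_config=None, deprecated_ir_v7=False):
    """Assemble an argument list for the Model Optimizer script.

    Assumption: the tool is started as "python3 mo.py" and takes the model
    through "--input_model"; adjust both to match the actual installation.
    """
    cmd = ["python3", "mo.py", "--input_model", input_model]
    if input_spec:
        # Example value: placeholder{i32}[1 300 300 3]
        cmd += ["--input", input_spec]
    if transformations_config:
        cmd += ["--transformations_config", transformations_config]
    if deprecated_ir_v7:
        cmd.append("--generate_deprecated_IR_V7")
    return cmd


def modernize(args):
    """Replace deprecated parameter names given as separate tokens."""
    return [RENAMED.get(arg, arg) for arg in args]


if __name__ == "__main__":
    command = build_mo_command(
        "model.pb",  # placeholder model file name
        input_spec="placeholder{i32}[1 300 300 3]",
        deprecated_ir_v7=True,
    )
    # Quote each argument so the braces and spaces survive a shell.
    print(" ".join(shlex.quote(arg) for arg in command))
```

Passing the arguments as a list (for example to subprocess.run) avoids shell quoting problems with the braces and spaces in the "--input" value.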

NOTE: Intel® System Studio is an all-in-one, cross-platform tool suite, purpose-built to simplify system bring-up and improve system and IoT device application performance on Intel® platforms. If you are using the Intel® Distribution of OpenVINO™ with Intel® System Studio, go to Get Started with Intel® System Studio.

Table of Contents

- Introduction to Intel® Deep Learning Deployment Toolkit

- Preparing and Optimizing your Trained Model with Model Optimizer

- Configuring Model Optimizer

- Converting a Model to Intermediate Representation (IR)

- Converting a Model Using General Conversion Parameters

- Converting Your Caffe* Model

- Converting Your TensorFlow* Model

- Converting BERT from TensorFlow

- Converting GNMT from TensorFlow

- Converting YOLO from DarkNet to TensorFlow and then to IR

- Converting FaceNet from TensorFlow

- Converting DeepSpeech from TensorFlow

- Converting Language Model on One Billion Word Benchmark from TensorFlow

- Converting Neural Collaborative Filtering Model from TensorFlow*

- Converting TensorFlow* Object Detection API Models

- Converting TensorFlow*-Slim Image Classification Model Library Models

- Converting CRNN Model from TensorFlow*

- Converting Your MXNet* Model

- Converting Your Kaldi* Model

- Converting Your ONNX* Model

- Model Optimization Techniques

- Cutting parts of the model

- Sub-graph Replacement in Model Optimizer

- Supported Framework Layers

- Intermediate Representation and Operation Sets

- Operations Specification

- Custom Layers in Model Optimizer

- Model Optimizer Frequently Asked Questions

- Known Issues

Typical Next Step: Introduction to Intel® Deep Learning Deployment Toolkit