Disclaimer

Low-precision 8-bit integer inference in the Inference Engine is a preview feature and requires the following prerequisites:

- The Inference Engine CPU plugin must be built with the Intel® Math Kernel Library (Intel® MKL) dependency. The Intel Distribution of OpenVINO satisfies this requirement by default. The requirement mainly matters if you use the open-source OpenVINO, which can also be built with OpenBLAS; an OpenBLAS build cannot be used for 8-bit integer inference.

- Intel® platforms that support at least one of the following extensions to the x86 instruction set:

  - Intel® Advanced Vector Extensions 512 (Intel® AVX-512)

  - Intel® Advanced Vector Extensions 2.0 (Intel® AVX2)

  - Intel® Streaming SIMD Extensions 4.2 (Intel® SSE4.2)

- A model must contain at least one activation layer of Rectified Linear Unit (ReLU) type. If this requirement is not satisfied, 8-bit inference is unavailable for this particular model in Inference Engine.
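As a rough illustration of the instruction-set prerequisite, the sketch below scans `/proc/cpuinfo`-style text for the extensions listed above. It is not part of OpenVINO. The helper name `supported_extensions` is hypothetical, and the mapping of Linux flag names (`avx512f`, `avx2`, `sse4_2`) to the listed extensions is an assumption; the plugin may check additional AVX-512 subsets.

```python
# Assumed mapping from Linux /proc/cpuinfo flag names to the extensions
# listed in the prerequisites. The Inference Engine's own check may differ.
REQUIRED_ANY = {
    "avx512f": "Intel AVX-512",
    "avx2": "Intel AVX2",
    "sse4_2": "Intel SSE4.2",
}


def supported_extensions(cpuinfo_text):
    """Return the listed extensions found in /proc/cpuinfo-style text."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        if line.lower().startswith("flags") and ":" in line:
            flags.update(line.split(":", 1)[1].split())
    return [name for flag, name in REQUIRED_ANY.items() if flag in flags]


# Made-up sample text; on Linux, pass open("/proc/cpuinfo").read() instead.
sample = "processor : 0\nflags : fpu sse4_2 avx avx2\n"
found = supported_extensions(sample)
print(found or "no listed extension found")
```

An empty result means none of the listed extensions were detected, so the instruction-set prerequisite is not met on that machine.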

The 8-bit inference feature was validated on the following topologies:

- Classification models:

  - Caffe Inception v1, Inception v4

  - Caffe ResNet-50 v1, ResNet-101 v1

  - Caffe MobileNet

  - Caffe SqueezeNet v1.0, SqueezeNet v1.1

  - Caffe VGG16, VGG19

  - TensorFlow Inception v3, Inception v4, Inception ResNet v2

  - Caffe DenseNet-121, DenseNet-161, DenseNet-169, DenseNet-201

- Object detection models:

  - Caffe SSD_SqueezeNet

  - Caffe SSD_MobileNet

  - Caffe SSD_Vgg16_300

  - TensorFlow SSD Mobilenet v1, SSD Mobilenet v2

- Semantic segmentation models:

  - Unet2D

- Recommendation system models:

  - NCF

Introduction

Much research in deep learning has explored low-precision computation during inference as a way to speed up deep learning pipelines. One popular approach is to reduce the precision of activation and weight values from fp32 to a smaller format, for example, fp11 or int8. For more information about this approach, refer to the Brief History of Lower Precision in Deep Learning section in this whitepaper.

8-bit computations (referred to as int8) offer better performance than inference in higher precision (for example, fp32) because more data fits into a single processor instruction. The cost of this speedup is usually reduced accuracy. However, the accuracy drop has been shown to be negligible in some cases, and how much of a drop is tolerable depends on the task, so the application engineer can set the maximum acceptable accuracy drop.

The current Inference Engine solution for low-precision inference uses Intel MKL-DNN, which supports inference of the following layers in 8-bit integer computation mode:

- Convolution

- FullyConnected (AVX-512 only)

- ReLU

- ReLU6

- Pooling

- Eltwise

- Concat

- Resample

This means that 8-bit inference can only be performed with the CPU plugin on the layers listed above. All other layers are executed in the format supported by the CPU plugin: 32-bit floating point format (fp32).
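The type-based rule above can be written as a small lookup. This is only a sketch of the rule as stated in this section: the function name `execution_precision` is hypothetical, and in practice a layer also needs a calibrated 8-bit profile before the plugin runs it in int8.

```python
# Layer types listed above as supported in 8-bit integer mode.
INT8_LAYER_TYPES = {
    "Convolution", "FullyConnected", "ReLU", "ReLU6",
    "Pooling", "Eltwise", "Concat", "Resample",
}
# Per the list above, FullyConnected is supported on AVX-512 only.
AVX512_ONLY = {"FullyConnected"}


def execution_precision(layer_type, has_avx512):
    """Return "int8" if the layer type can run in 8-bit mode, else "fp32"."""
    if layer_type not in INT8_LAYER_TYPES:
        return "fp32"
    if layer_type in AVX512_ONLY and not has_avx512:
        return "fp32"
    return "int8"


for layer in ("Convolution", "FullyConnected", "SoftMax"):
    print(layer, execution_precision(layer, has_avx512=False))
```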

Low-Precision 8-bit Integer Inference Workflow

For 8-bit integer computations, the original model (or its Intermediate Representation) must be in the fp32 format. To compute a layer in the int8 format, the input data (input blob) and the weights of that layer (as well as biases and any other blobs of the layer) must be quantized, that is, converted from the fp32 to the int8 format. The quantization process converts model input into a lower-precision format. The precision and accuracy factors are specified by the scale and the rounding mode, respectively. Read more about the mathematical computations under the hood in the white paper.
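To make the roles of the scale and the rounding mode concrete, here is a minimal sketch of symmetric linear quantization in plain Python. It is an illustration, not the Inference Engine implementation: the helper names are hypothetical, the scale is assumed to be derived from the largest absolute value, and the rounding mode is Python's built-in round-half-to-even.

```python
def scale_for(values, signed=True):
    """Assumed scale choice: map the largest magnitude onto the int8 limit."""
    max_abs = max(abs(v) for v in values)
    if max_abs == 0:
        return 1.0  # all-zero input: any scale works
    return (127.0 if signed else 255.0) / max_abs


def quantize(values, scale, signed=True):
    """Scale, round (half-to-even), and saturate to the 8-bit range."""
    lo, hi = (-128, 127) if signed else (0, 255)
    return [max(lo, min(hi, round(v * scale))) for v in values]


def dequantize(quantized, scale):
    """Map 8-bit integers back to approximate fp32 values."""
    return [q / scale for q in quantized]


weights = [-1.0, -0.5, 0.0, 0.25, 1.0]
scale = scale_for(weights)
q = quantize(weights, scale)
restored = dequantize(q, scale)
print(q)
print(restored)
```

For values inside the representable range, the round-trip error of each value is at most half a quantization step, that is, `0.5 / scale`. Values outside the range saturate, which is why the choice of scale matters.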

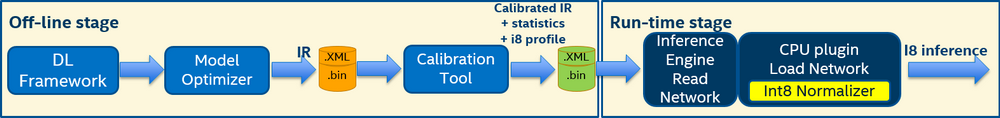

The 8-bit inference pipeline includes two stages (see also the accompanying figure):

- Offline stage, or model calibration. During this stage, scale factors and execution profiles are defined for each layer so that the accuracy drop of 8-bit integer inference stays within the specified threshold. The output of this stage is a calibrated model.

- Run-time stage. This stage is an internal procedure of the CPU plugin. During this stage, the calibrated model is loaded into the plugin. For each layer that has a corresponding execution profile, the plugin normalizes the weights (and biases, if present). It also adds scale factors at particular places in the model, chosen by an internal algorithm to maximize performance and minimize the number of extra layout manipulations.

Offline Stage: Model Calibration

One of the vital components of successful data quantization is a set of scale factors for each layer that supports 8-bit computations. These scales are obtained from layer activation statistics that the OpenVINO Calibration Tool collects on a calibration dataset. The calibration dataset contains images and can be a subset of the validation set. A small fraction of the images from the validation dataset (1-5%) is enough to create a calibration dataset. For more information on dataset preparation, refer to the Accuracy Checker Tool.

NOTE: OpenVINO 2019 R1 release introduced a Python* version of the Calibration Tool. The C++ version of the Calibration Tool is deprecated.

To calibrate a model, the Calibration Tool performs the following steps:

- Collecting layer statistics (minimum and maximum values of layer activations) and the baseline accuracy metric for fp32 inference. Note that the accuracy metric depends on the type of the calibrated model: for classification networks, the top-1 metric is used; for object detection models, the mAP metric is used.

- Collecting the accuracy metric for 8-bit inference. During this step, different filters are applied to the collected activation statistics to remove activation outliers (isolated values that are very different from the majority of known values). If the resulting accuracy satisfies the required level with respect to the accepted accuracy drop delta, the Calibration Tool stops the calibration process.

- Collecting accuracy drop information on the calibration dataset for each layer that supports 8-bit computations, using the Normalized Root-Mean-Square Deviation metric. This metric makes it possible to sort all layers in decreasing order, so that it is clear which layers cause the biggest accuracy drop.

- Eliminating layers with the largest accuracy drop from 8-bit computation by switching them back to fp32 mode. After eliminating one layer, the Calibration Tool computes the accuracy of this configuration. Until the resulting accuracy satisfies the required level with respect to the accepted accuracy drop delta (1% by default), the tool continues switching layers back to fp32 computations in the order defined in the previous step. However, a calibrated model with all layers returned to fp32 computations is meaningless, so reaching that state acts as a hard stop for the whole calibration process.
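The last two steps can be sketched as a greedy fallback loop. This is a simplified illustration, not the Calibration Tool source: `calibrate` and the `evaluate` callback are hypothetical, the outlier-filtering step is omitted, NRMSD is assumed to be normalized by the range of the reference values, and the accepted drop is assumed to be measured in percentage points.

```python
import math


def nrmsd(reference, candidate):
    """RMSD between two equal-length sequences, normalized by the reference range."""
    if not reference:
        raise ValueError("reference must not be empty")
    mean_sq = sum((r - c) ** 2 for r, c in zip(reference, candidate)) / len(reference)
    value_range = max(reference) - min(reference)
    return math.sqrt(mean_sq) / value_range if value_range else 0.0


def calibrate(int8_layers, layer_nrmsd, evaluate, fp32_accuracy, max_drop=1.0):
    """Return the set of layers kept in int8, or None on the hard stop.

    int8_layers   -- names of layers that support 8-bit computation
    layer_nrmsd   -- dict: layer name -> NRMSD of int8 vs fp32 outputs
    evaluate      -- hypothetical callback: set of int8 layers -> accuracy
    fp32_accuracy -- baseline accuracy of fp32 inference
    max_drop      -- accepted accuracy drop delta
    """
    remaining = set(int8_layers)
    if fp32_accuracy - evaluate(remaining) <= max_drop:
        return remaining
    # Layers with the largest deviation are switched back to fp32 first.
    worst_first = sorted(remaining, key=lambda name: layer_nrmsd[name], reverse=True)
    for name in worst_first:
        remaining.discard(name)
        if not remaining:
            return None  # every layer is back in fp32: hard stop
        if fp32_accuracy - evaluate(remaining) <= max_drop:
            return remaining
    return None


# Toy data: made-up per-layer accuracy cost and NRMSD scores.
penalty = {"conv1": 0.2, "conv2": 2.5, "fc": 0.4}
scores = {"conv1": 0.01, "conv2": 0.30, "fc": 0.05}


def toy_evaluate(layers):
    return 75.0 - sum(penalty[name] for name in layers)


kept = calibrate(penalty, scores, toy_evaluate, fp32_accuracy=75.0)
print(sorted(kept))
```

In this toy run, switching only the worst-ranked layer back to fp32 is enough to bring the accuracy drop under the threshold, so the other two layers stay in int8.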

When the calibration completes, the tool writes the resulting statistics and the modified Intermediate Representation (IR) to the .xml file. The tool does not change the IR structure, so the layer hierarchy is the same. However, the layers that are chosen to be executed in 8-bit format are marked with the appropriate profile attribute, and their statistics are stored at the end of the .xml file.

When you pass the calibrated IR to the CPU plugin, the plugin automatically recognizes it as calibrated and performs 8-bit inference. Other plugins do not support 8-bit inference, so if you pass the calibrated model to them, the statistics and additional attributes are ignored and the model is inferred in the precision that the plugin supports.

Run-Time Stage: Quantization

This is the second stage of the 8-bit integer inference. After you load the calibrated model IR to the CPU plugin, it performs quantization for 8-bit inference:

- Inserts the corresponding scale factors to convert layer inputs to the unsigned int8 data type and to normalize layer outputs to the unsigned 8-bit integer, signed 8-bit integer, or 32-bit floating-point data type

- Normalizes the weights of convolution layers to fit the signed 8-bit integer data type

- Normalizes the biases of convolution layers to fit the signed 32-bit integer data type
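The three normalizations above can be illustrated for a single output value of a layer. This is a minimal sketch under stated assumptions, not the plugin's algorithm: the function names are hypothetical, inputs are assumed to be non-negative (for example, after ReLU) with a known positive maximum, and the bias scale is assumed to be the product of the input and weight scales so that it matches the scale of the integer accumulator.

```python
def clamp(value, lo, hi):
    return max(lo, min(hi, value))


def quantize_conv_params(weights, biases, max_input):
    """Return (q_weights, q_biases, input_scale, weight_scale)."""
    input_scale = 255.0 / max_input                      # inputs -> unsigned int8
    weight_scale = 127.0 / max(abs(w) for w in weights)  # weights -> signed int8
    q_weights = [clamp(round(w * weight_scale), -128, 127) for w in weights]
    # Assumption: biases share the accumulator scale and fit signed int32.
    acc_scale = input_scale * weight_scale
    q_biases = [clamp(round(b * acc_scale), -2**31, 2**31 - 1) for b in biases]
    return q_weights, q_biases, input_scale, weight_scale


weights, bias, inputs = [0.5, -1.0, 0.25], 0.1, [1.0, 2.0, 4.0]
q_w, q_b, s_in, s_w = quantize_conv_params(weights, [bias], max_input=4.0)
q_in = [clamp(round(x * s_in), 0, 255) for x in inputs]

# Integer multiply-accumulate, then rescale the result back to fp32.
acc = sum(a * w for a, w in zip(q_in, q_w)) + q_b[0]
approx = acc / (s_in * s_w)
exact = sum(x * w for x, w in zip(inputs, weights)) + bias
print(approx, exact)
```

The rescaled integer result stays close to the fp32 result; the remaining difference comes from rounding the inputs, weights, and bias to integers.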

Performance Counters

Information about layer precision is stored in the performance counters that are available from the Inference Engine API. The layers have the following marks:

- Suffix I8 for layers that had 8-bit data type input and were computed in 8-bit precision

- Suffix FP32 for layers computed in 32-bit precision

For example, the performance counters table for the Inception model can look as follows:

The execType column of the table includes inference primitives with specific suffixes.
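As an illustration of how these suffixes can be used, the sketch below groups layers by the suffix of their execType string. It does not call the Inference Engine API. The function name `precision_of` is hypothetical, and the layer names and execType values in the sample are made up for the example.

```python
def precision_of(exec_type):
    """Classify an execType string by the suffixes described above."""
    if exec_type.endswith("I8"):
        return "int8"
    if exec_type.endswith("FP32"):
        return "fp32"
    return "unknown"


# Made-up (layer name, execType) pairs standing in for real counters.
sample = [
    ("conv1", "jit_avx512_I8"),
    ("fc", "jit_gemm_FP32"),
    ("prob", "ref_any"),
]

summary = {}
for layer_name, exec_type in sample:
    summary.setdefault(precision_of(exec_type), []).append(layer_name)

for precision, layers in summary.items():
    print(precision, layers)
```

A summary like this gives a quick view of which layers of a calibrated model actually ran in 8-bit precision.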